RankSRGAN: Generative Adversarial Networks with Ranker for Image Super-Resolution

Abstract

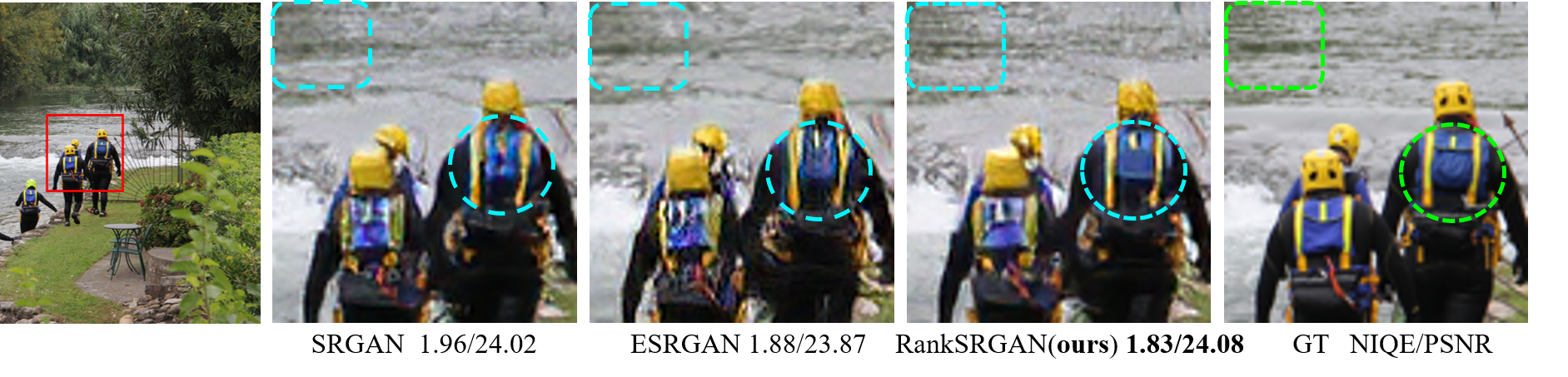

Generative Adversarial Networks (GANs) have demonstrated the potential to recover realistic details for single image super-resolution (SISR). To further improve the visual quality of super-resolved results, the PIRM2018-SR Challenge employed perceptual metrics such as PI, NIQE, and Ma to assess perceptual quality. However, existing methods cannot directly optimize these non-differentiable perceptual metrics, which are shown to be highly correlated with human ratings. To address this problem, we propose Super-Resolution Generative Adversarial Networks with Ranker (RankSRGAN) to optimize the generator in the direction of perceptual metrics. Specifically, we first train a Ranker that learns the behavior of perceptual metrics and then introduce a novel rank-content loss to optimize the perceptual quality. The most appealing part is that the proposed method can combine the strengths of different SR methods to generate better results. Extensive experiments show that RankSRGAN achieves visually pleasing results and reaches state-of-the-art performance in perceptual metrics.

Contributions

- We propose a general perceptual SR framework, RankSRGAN, that can optimize the generator in the direction of non-differentiable perceptual metrics and achieve state-of-the-art performance.

- We are the first to use the results of other SR methods to build the training dataset. The proposed RankSRGAN can combine the strengths of different SR methods and generate better results.

- The proposed SR framework is highly flexible and can produce diverse results given different rank datasets, perceptual metrics, and loss combinations.

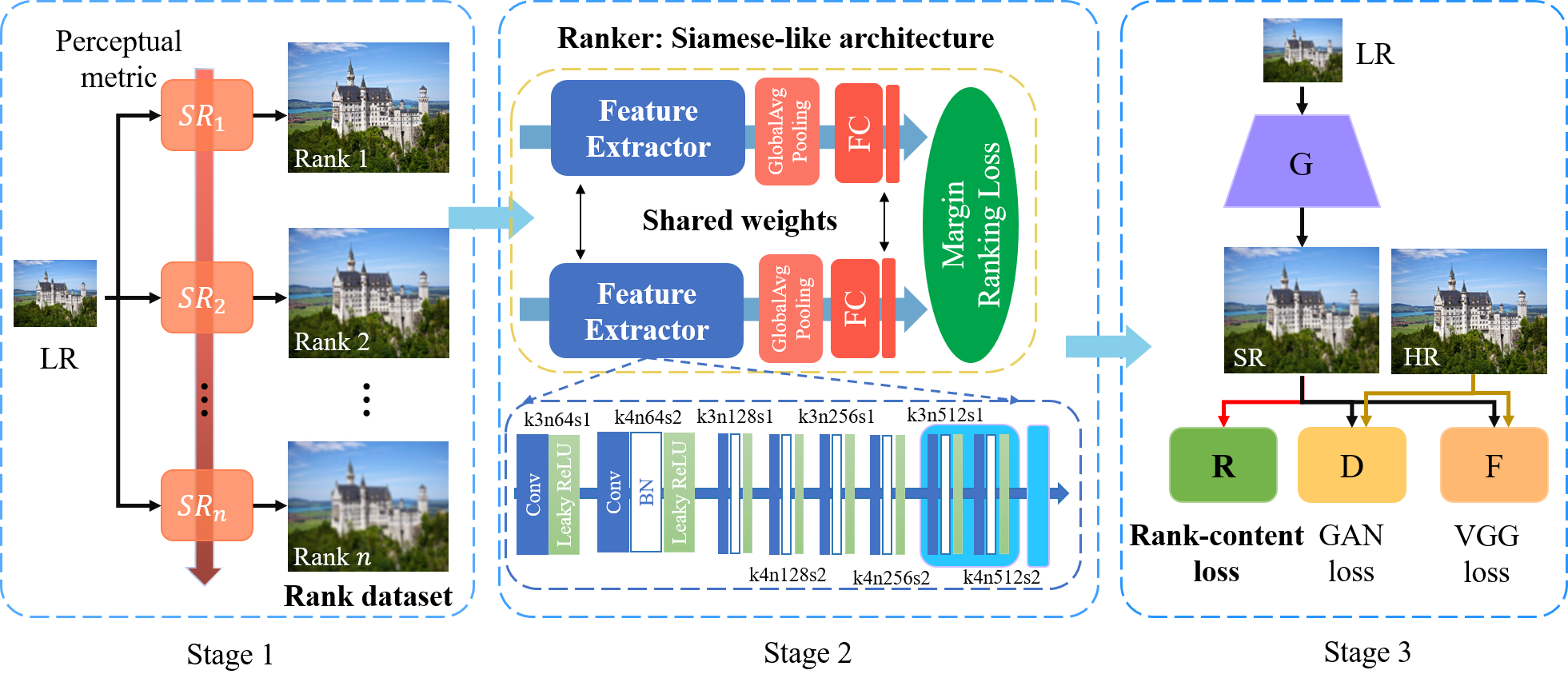

RankSRGAN Framework

The overview of the proposed RankSRGAN method:

- Stage 1: Generate pair-wise rank images with different SR models, ordered according to perceptual metrics.
- Stage 2: Train a Siamese-like Ranker network.
- Stage 3: Introduce a rank-content loss derived from the well-trained Ranker to guide GAN training.

RankSRGAN consists of a generator (G), a discriminator (D), a fixed feature extractor (F), and a Ranker (R).
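The two losses implied by Stages 2 and 3 can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes the Ranker is trained with a standard pairwise margin (hinge) ranking loss, that a lower Ranker score means better perceptual quality, and that the rank-content loss is a sigmoid of the Ranker's score for the generated image. The function names and the margin value are hypothetical.

```python
import math

def margin_ranking_loss(s1, s2, y, margin=0.5):
    """Pairwise hinge loss on two Ranker scores (assumed formulation).

    y = +1 means s1 should be larger than s2; y = -1 means s1 should be smaller.
    The loss is zero once the scores are ordered correctly by at least `margin`.
    """
    return max(0.0, -y * (s1 - s2) + margin)

def rank_content_loss(ranker_score):
    """Sigmoid of the Ranker score for a generated image (assumed form).

    With lower scores meaning better quality, minimizing this value pushes
    the generator toward outputs the Ranker considers more perceptual.
    """
    return 1.0 / (1.0 + math.exp(-ranker_score))

# Image 1 is perceptually better, so its score should be lower (y = -1).
print(margin_ranking_loss(1.0, 3.0, y=-1))  # correctly ordered pair
print(margin_ranking_loss(3.0, 1.0, y=-1))  # wrongly ordered pair
print(rank_content_loss(0.0))
```

In a real training loop these would be computed on tensors so that gradients flow through the Ranker into the generator; the scalar version here only shows the shape of the objectives.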

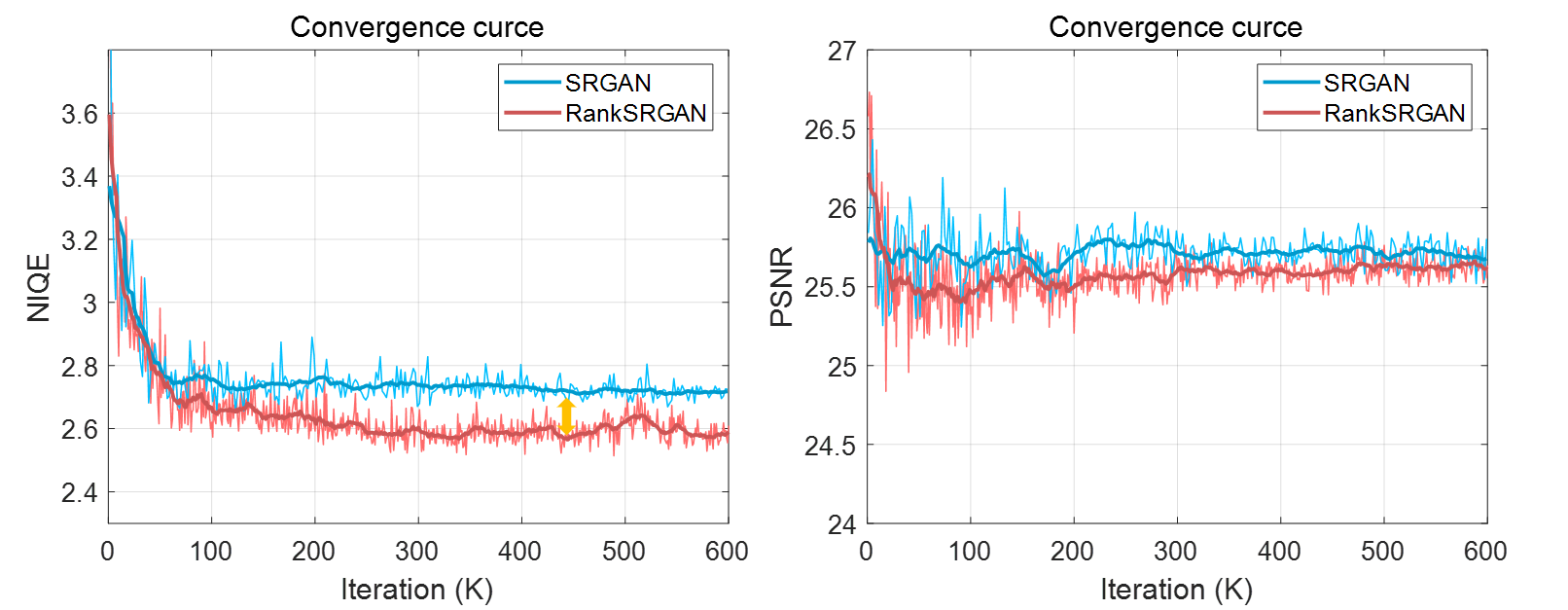

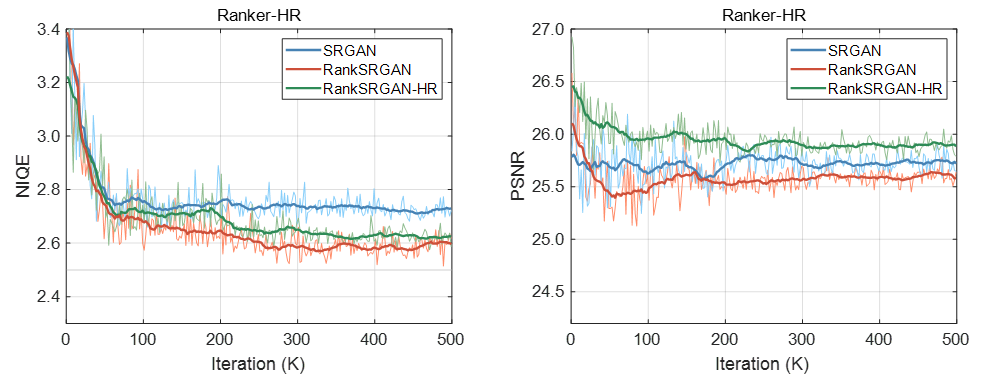

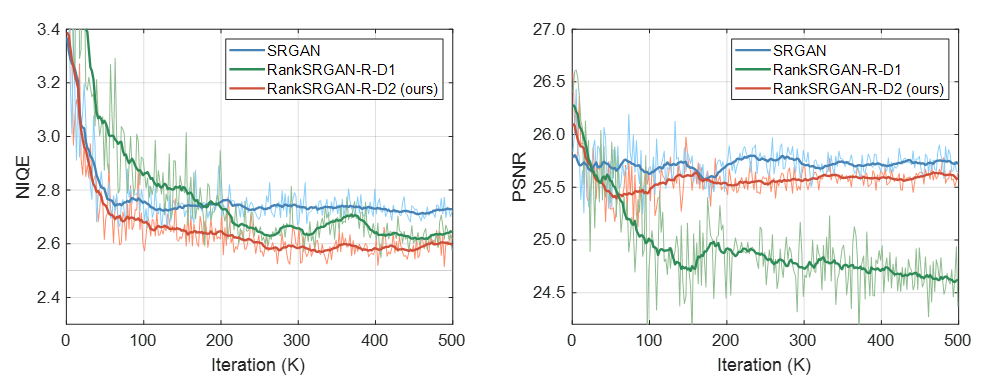

We show the convergence curves of RankSRGAN. RankSRGAN is consistently better than SRGAN by a large margin on NIQE.

Ablation Studies on RankSRGAN

1. Effect of different rank datasets

We show the convergence curves of RankSRGAN with different rank datasets in NIQE and PSNR. RankSRGAN uses the Ranker with rank dataset (SRResNet, SRGAN, ESRGAN). RankSRGAN-HR employs the Ranker with rank dataset (SRResNet, SRGAN, HR).
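As a rough sketch of how a rank dataset might be assembled from several SR models, the snippet below turns per-method metric scores for one image into labeled pairs. The function name, the label convention (+1 when the first method is better), and the example NIQE values are all hypothetical and chosen only for illustration.

```python
from itertools import combinations

def build_rank_pairs(scores, lower_is_better=True):
    """Turn {method: metric score} into (method_a, method_b, label) pairs.

    label = +1 if method_a is perceptually better than method_b, else -1.
    Ties are skipped because they carry no ranking information.
    """
    pairs = []
    for (name_a, score_a), (name_b, score_b) in combinations(scores.items(), 2):
        if score_a == score_b:
            continue
        a_better = score_a < score_b if lower_is_better else score_a > score_b
        pairs.append((name_a, name_b, 1 if a_better else -1))
    return pairs

# Made-up NIQE values for one image patch (lower is better).
niqe_scores = {"SRResNet": 7.1, "SRGAN": 4.2, "ESRGAN": 3.6}
for pair in build_rank_pairs(niqe_scores):
    print(pair)
```

Swapping ESRGAN for HR in the dictionary would give the RankSRGAN-HR style of dataset; for a metric where higher is better, such as Ma, pass `lower_is_better=False`.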

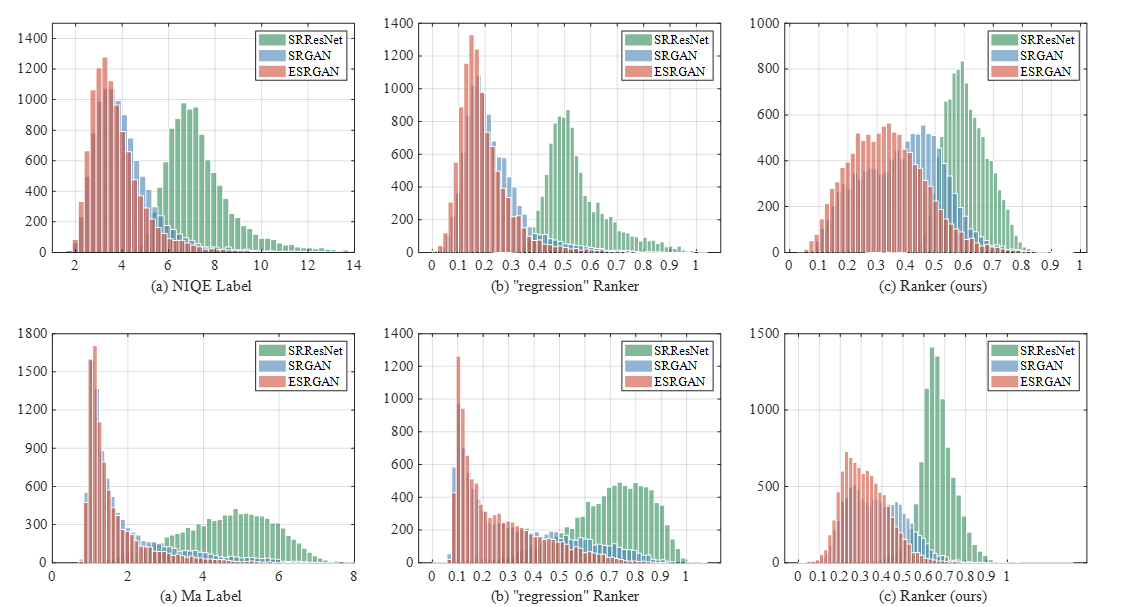

2. Effect of Ranker: Rank vs. Regression

We show the histogram distributions of Ranker outputs for the NIQE and Ma metrics. The convergence curves are shown in section 3.

3. Effect of different perceptual metrics

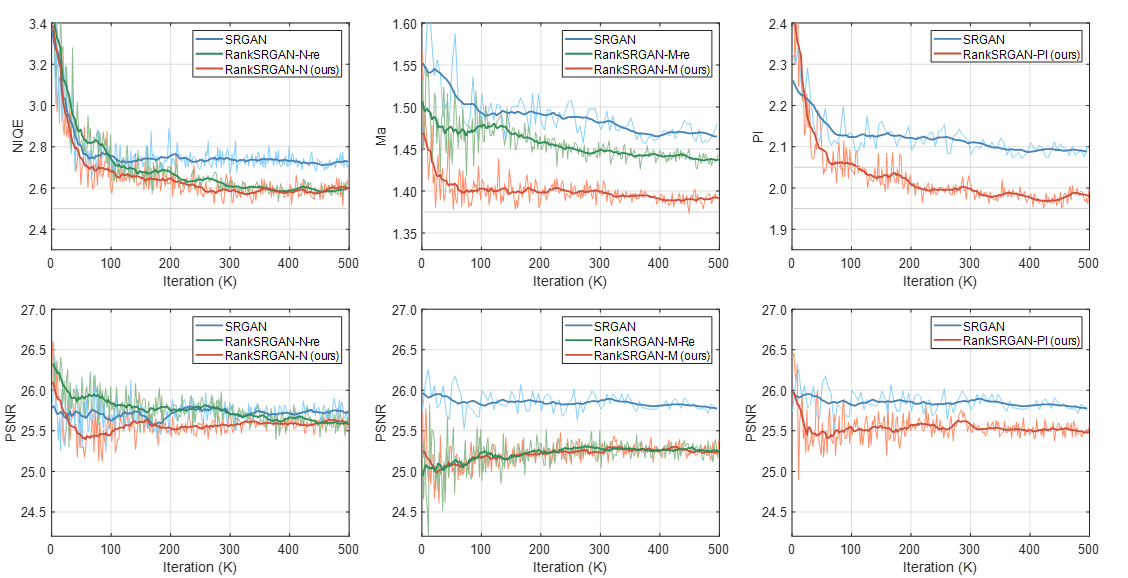

We present the convergence curves, which show that RankSRGAN achieves a consistent improvement over the baseline SRGAN on different perceptual metrics (NIQE, Ma and PI).
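For reference, the three metrics are related: PI, as defined for the PIRM2018-SR Challenge, combines the other two as PI = ((10 - Ma) + NIQE) / 2, where lower PI and NIQE are better and higher Ma is better. A small helper (the function name is ours) makes the relationship concrete:

```python
def perceptual_index(ma, niqe):
    """Perceptual Index from the PIRM2018-SR Challenge: ((10 - Ma) + NIQE) / 2."""
    return 0.5 * ((10.0 - ma) + niqe)

# Illustrative values only, not results from the paper.
print(perceptual_index(ma=8.0, niqe=3.0))
```

Because PI is an average of a flipped Ma score and NIQE, a Ranker trained on either component metric can be expected to move PI as well, though by how much depends on the data.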

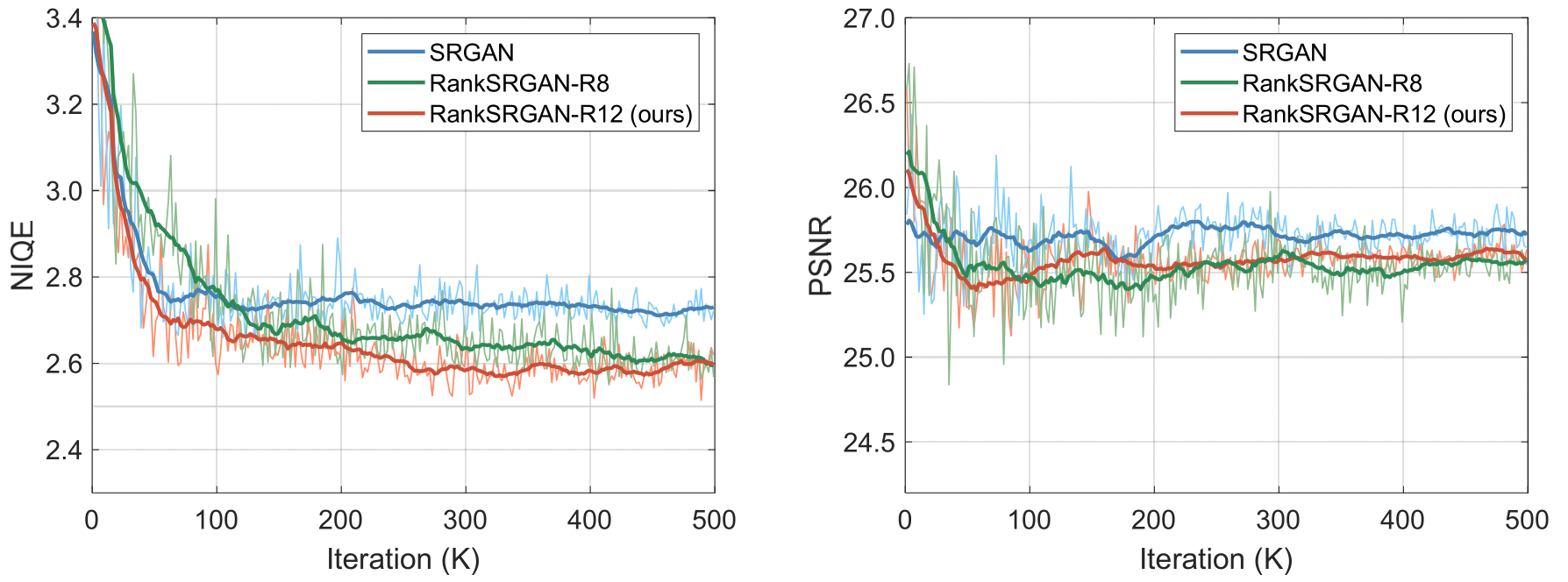

4. Effect of Ranker with different architectures

We train three VGG networks of increasing depth: VGG-8, VGG-12 and VGG-16. Since VGG-12 achieves the same accuracy as VGG-16 (SROCC 0.88), we apply VGG-8 (SROCC 0.83) and VGG-12 (SROCC 0.88) to RankSRGAN. The figure shows the performance of RankSRGAN with different Rankers. A Ranker with a higher SROCC achieves better performance when applied to RankSRGAN.
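SROCC is the Spearman rank-order correlation coefficient: the Pearson correlation computed on ranks rather than raw values. The sketch below is a plain-Python version with average ranks for ties. We assume it is applied to the Ranker's predicted scores and the true metric values; the helper names are ours, and the input lists in the example are made up.

```python
import math

def average_ranks(values):
    """1-based ranks of `values`; tied entries share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of entries tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def srocc(x, y):
    """Spearman rank-order correlation: Pearson correlation of the ranks.

    Undefined (division by zero) if either input is constant.
    """
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    return cov / math.sqrt(var_x * var_y)

# Made-up Ranker outputs vs. metric values for four images.
predicted = [0.1, 0.7, 0.4, 0.9]
metric = [3.2, 5.0, 5.5, 6.1]
print(srocc(predicted, metric))
```

Only the ordering matters, so a Ranker whose scores are on a completely different scale from NIQE can still reach a high SROCC.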

5. Effect of Rank dataset with different sizes

We use DIV2K to generate rank dataset 1 (D1) with 15K image pairs and DIV2K+Flickr2K to generate rank dataset 2 (D2) with 150K image pairs. We then use D1 and D2 to train Ranker1 (SROCC 0.78) and Ranker2 (SROCC 0.88), respectively. The convergence curves show that Ranker2, with its higher SROCC, reaches better performance in NIQE and PSNR.

Results

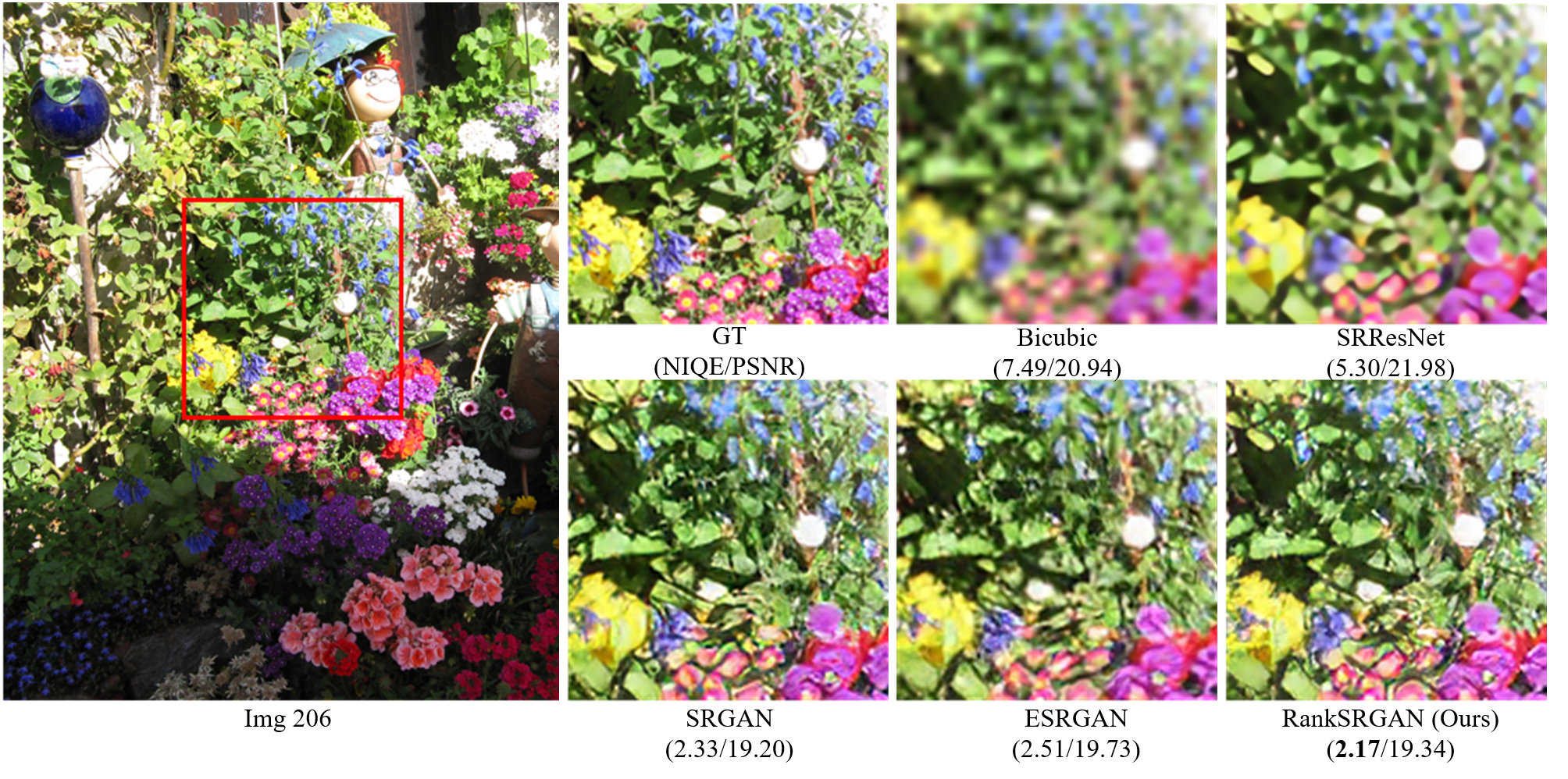

Visual results

Acknowledgement

Thanks to Xintao Wang, who discussed with us the details of ESRGAN/RankSRGAN, the code architecture, and the user study.

Contact

If you have any questions, please contact Wenlong Zhang at zhangwenlong0724@gmail.com.